The State of Agent Memory (2026)

What $31.5M in funding and 120K GitHub stars' worth of agent memory looks like from the inside

8-min read

We were four days into reading Mem0’s source code when we found the graph mentions counter.

Every time an entity gets referenced, whether a person, a project, or a concept, Mem0 increments a mentions count on that node and its edges. It’s tracked on every update. Written to the database. Never read back. Never used in retrieval scoring. The data’s there, the infrastructure’s there; it just hasn’t been connected yet.
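To make the write-only pattern concrete, here is a minimal sketch. `Node`, `ToyGraphMemory`, `upsert`, and `search` are hypothetical names invented for illustration; this is not Mem0's code or API, only the shape of a counter that gets written and never consulted.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    mentions: int = 0  # bumped on every write


class ToyGraphMemory:
    """Hypothetical in-memory stand-in, not Mem0's actual classes."""

    def __init__(self) -> None:
        self.nodes: dict[str, Node] = {}

    def upsert(self, name: str) -> Node:
        # Write path: create the node if needed, then count the mention.
        node = self.nodes.setdefault(name, Node(name))
        node.mentions += 1
        return node

    def search(self, similarity: dict[str, float]) -> list[str]:
        # Read path: ranking uses similarity only; `mentions` is never read.
        known = [name for name in similarity if name in self.nodes]
        return sorted(known, key=lambda name: similarity[name], reverse=True)


memory = ToyGraphMemory()
for name in ["alice", "alice", "alice", "bob"]:
    memory.upsert(name)

# "alice" has three mentions, yet she still ranks below "bob".
ranked = memory.search({"alice": 0.4, "bob": 0.9})
```

In this toy version the counter costs a write on every update and changes nothing about what comes back.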

That turned out to be the recurring theme. These codebases are full of good infrastructure and good ideas, often in different places than you’d expect.

I should back up.

Why we did this

We’re building Mneme, a proactive engineering intelligence system that watches your team’s signals (commits, PRs, Slack threads, Jira tickets, error spikes, Stripe transactions) and synthesizes context before decisions get made. To build that, we needed to understand what already exists in the memory space. Blog posts and READMEs only get you so far, so we dove into the actual source code.

We cloned 10 of the most relevant repos and read through them (read: with some heavy lifting from Claude Code). The list:

Graphiti (23K stars),

Mem0 (48K stars, $24M raised),

Cognee (12.5K stars, $7.5M seed),

Letta (21K stars),

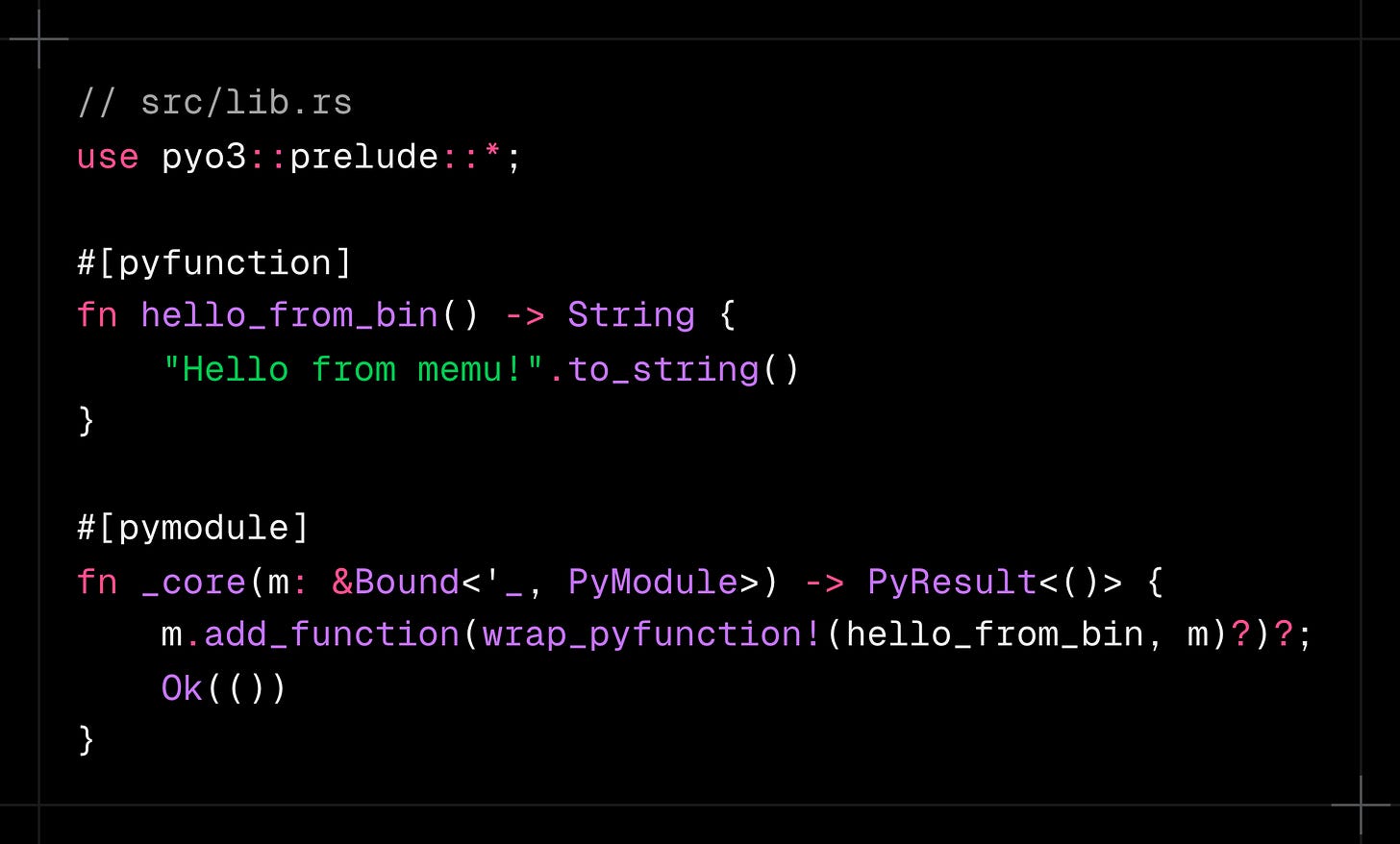

memU (11K stars),

SimpleMem (3K stars),

mcp-memory-service (1.4K stars),

A-Mem (800 stars),

memsearch (612 stars), and

claude-cognitive (310 stars)

Over 120K GitHub stars and $31.5M in venture funding aimed at a single thesis: LLMs need persistent memory to be useful.

They’re right about the thesis. The disagreement is about how to get there. And we have an obvious bias, since we’re building in this space. The research is real, but the interpretation is ours. Keep that in mind.

Three approaches

The approaches fall into three paradigms:

System-managed extraction: the system decides what to remember

Mem0, Graphiti, and Cognee fall into this bucket: expensive writes, clean output

Agent self-management: the agent decides what to remember

Letta’s MemGPT holds its own here - elegant, but dependent on agent discipline

Compression and retrieval: the system compresses conversation history

SimpleMem, memsearch, mcp-memory-service, claude-cognitive - all trade fidelity for token efficiency
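The difference between the three shows up most clearly on the write path. The sketch below is a rough illustration, not code from any of the repos: the function names and the `extract`, `agent_decides`, and `compress` callables are all hypothetical stand-ins for whatever LLM call or heuristic a real system would use.

```python
from typing import Callable, Optional


def system_managed_write(
    turn: str, extract: Callable[[str], list[str]], store: list[str]
) -> None:
    """The system runs an extractor on every turn (expensive write, clean store)."""
    store.extend(extract(turn))


def agent_managed_write(
    turn: str, agent_decides: Callable[[str], Optional[str]], store: list[str]
) -> None:
    """The agent chooses what to save; nothing is stored unless it says so."""
    note = agent_decides(turn)
    if note is not None:
        store.append(note)


def compressed_write(
    turn: str, compress: Callable[[str], str], store: list[str]
) -> None:
    """Every turn is kept, but shortened (fidelity traded for tokens)."""
    store.append(compress(turn))


turn = "Alice prefers Postgres for the billing service. The weather was nice."

extracted: list[str] = []
system_managed_write(
    turn,
    # Toy extractor: keep only sentences that state a preference.
    lambda t: [s.strip() for s in t.split(".") if "prefers" in s],
    extracted,
)

agent_notes: list[str] = []
agent_managed_write(
    turn,
    # Toy agent: saves a turn only when explicitly asked to remember it.
    lambda t: t if "remember" in t.lower() else None,
    agent_notes,
)

compressed: list[str] = []
# Toy compressor: keep the first four words.
compressed_write(turn, lambda t: " ".join(t.split()[:4]), compressed)
```

The same turn lands as one clean fact, nothing at all, or a lossy fragment, depending on who owns the write.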

Most production systems will need some mix of all three. But what I found more interesting than the paradigm choices was what happened when I looked at the actual implementations.

What we found when we read the code

The gap between marketing and implementation runs in both directions, and both are worth seeing.

Graphiti’s bi-temporal model is as good as advertised. This is from graphiti_core/edges.py:

    class EntityEdge(Edge):
        # Edge base class provides: uuid, source_node_uuid, target_node_uuid, created_at
        name: str = Field(description='name of the edge, relation name')
        fact: str = Field(description='fact representing the edge and nodes that it connects')
        fact_embedding: list[float] | None = Field(default=None)
        episodes: list[str] = Field(default=[])
        expired_at: datetime | None = Field(
            default=None, description='datetime of when the node was invalidated'
        )
        valid_at: datetime | None = Field(
            default=None, description='datetime of when the fact became true'
        )
        invalid_at: datetime | None = Field(
            default=None, description='datetime of when the fact stopped being true'
        )

Four datetime fields. valid_at and invalid_at track when a fact was true in the real world. created_at and expired_at track when the system learned and retired the fact. When a new fact contradicts an old one, the old edge gets invalid_at set to the new edge’s valid_at. Nothing is ever deleted. This enables a query you can’t do anywhere else: “What did we know about X at time T?”
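A minimal sketch of how those four fields can answer point-in-time questions. `Fact`, `supersede`, `known_at`, and `true_at` are hypothetical names, not Graphiti's API. Setting `expired_at` to the new fact's `created_at` is an assumption made for this example; the rule for `invalid_at` follows the description above.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Fact:
    """Simplified stand-in for an edge: the fact text plus the four timestamps."""
    fact: str
    created_at: datetime                   # when the system learned it
    valid_at: Optional[datetime] = None    # when it became true in the world
    invalid_at: Optional[datetime] = None  # when it stopped being true
    expired_at: Optional[datetime] = None  # when the system retired it


def supersede(old: Fact, new: Fact) -> None:
    """Close out a contradicted fact without deleting it."""
    old.invalid_at = new.valid_at
    old.expired_at = new.created_at  # assumption made for this sketch


def known_at(facts: list[Fact], t: datetime) -> list[Fact]:
    """Facts the system held as current at system time t."""
    return [
        f for f in facts
        if f.created_at <= t and (f.expired_at is None or t < f.expired_at)
    ]


def true_at(facts: list[Fact], t: datetime) -> list[Fact]:
    """Facts that were true in the world at t, using everything recorded so far."""
    return [
        f for f in facts
        if (f.valid_at is None or f.valid_at <= t)
        and (f.invalid_at is None or t < f.invalid_at)
    ]


old = Fact("Alice works at Acme", datetime(2024, 1, 10), valid_at=datetime(2023, 6, 1))
new = Fact("Alice works at Initech", datetime(2025, 3, 5), valid_at=datetime(2025, 2, 1))
supersede(old, new)
facts = [old, new]

# On 2025-02-15 Alice had already moved, but the system had not heard yet.
believed = [f.fact for f in known_at(facts, datetime(2025, 2, 15))]
actual = [f.fact for f in true_at(facts, datetime(2025, 2, 15))]
```

Here `believed` holds the Acme fact and `actual` holds the Initech fact: the two timelines disagree for the month between the change and the system finding out, and both answers stay recoverable because the old fact is closed out, not removed.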